Doing local development with Node is simple. All you have to do is run node app.js in the folder where your source code lives, and your application is ready to serve.

Where things get complicated is when you want to put your app in production, on a web server for the whole world to admire.

If you are coming from PHP or Ruby on Rails you might be used to having a very simple way of hosting & deploying your application. All you have to do is put your code into a specific folder. Every time you have an update, just replace the code. It just works.

In the world of Node it can be a little bit more complicated, but it is worth it. Let’s look at how you can host and deploy a production-ready Node.js application.

First, you will need SSH access to a freshly installed server. In this article you will see how to do that on CentOS 7.

All steps are easily reproducible on any other flavor of Linux. If you are interested in Ubuntu, we have another article; check the links at the bottom.

Your server can be running in AWS, Rackspace or even in your local VirtualBox. It doesn’t really matter, the steps are always the same.

Getting your hands dirty

I know that you are impatient to learn what the setup will be, so let’s get this out of the way.

First, we will use Nginx to handle all web requests. Your Node app will not be exposed directly to the web; everything will go through Nginx.

Requests for static content will be handled directly by Nginx. They will never reach your Node application.

If we need to support SSL/TLS, it will also be handled by Nginx. The Node application doesn’t even need to know whether there is SSL or not. It’s not its business.

All other web requests will be handled again by Nginx but forwarded to our Node application code.

Second, to ensure that our node application is always on, even when the application crashes or the server is restarted, we are going to create a systemd service.

Last, we will launch as many instances of our application as there are CPU cores available on our server. The purpose is to make the most of the available resources.

Each instance will listen for requests on a separate port and Nginx will forward the appropriate requests to it. In effect, Nginx will also act as a load balancer.
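To make the load balancing idea concrete, here is a minimal sketch of round-robin selection, which is the strategy Nginx applies to an upstream group by default. Nginx does this internally, so you never write this code yourself. The makeRoundRobin helper is a hypothetical name used only for illustration, and ports 5000 and 5001 are the ones we will configure later in this article.

```javascript
// Illustrative only: a toy version of round-robin backend selection.
// makeRoundRobin is a hypothetical helper, not part of Nginx or Node.
function makeRoundRobin(backends) {
  let next = 0
  return function pick() {
    const backend = backends[next]
    // Advance to the following backend, wrapping around at the end.
    next = (next + 1) % backends.length
    return backend
  }
}

const pick = makeRoundRobin(['127.0.0.1:5000', '127.0.0.1:5001'])
console.log(pick(), pick(), pick())
// prints: 127.0.0.1:5000 127.0.0.1:5001 127.0.0.1:5000
```

Each incoming request simply goes to the next instance in the list, so the load is spread evenly across both ports.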

If you don’t understand this setup or you’ve never heard of some of its components, don’t worry. Everything will be explained in detail below.

On the other hand if all of this is very clear to you, you can skip the next few paragraphs and go directly to the actual commands and files that we will use to make it all work.

What does it mean to host an app?

Just like on your development machine you can run node app.js on your server and your code will be executed perfectly.

However, this is far from ideal and there are many problems with it. Let’s look at just a few of them.

Serving static files, like JavaScript, images & CSS, can be done with Node but it is not very efficient. It can use too much memory and it might not cache frequently accessed files, which in turn can make your Node app slow or even crash it.

Establishing a secure SSL/TLS connection is not simple, yet most web applications require it. There are certificate files to handle as well as other small details. Moreover, your application code should not have to care whether the connection is secure or not; handling that there only adds unnecessary complexity to your logic.

Limiting file uploads is critical for any app which allows file uploading. Otherwise, unintentionally or intentionally a user may try to upload a 100GB (or much bigger) file and crash your server. Implementing this efficiently in Node is hard.

These are just a few of the reasons, and there are many more, why you should have something else in front of your Node application to handle user requests.

It should serve static files, establish secure connections, as well as other things and decide when a request should be handled by your application code and when not.

Nginx is one of the most widely used web servers. It can do all of the above and a lot more. In addition, it is very easy to set up. That is why we are going to use it.

Use all available resources

Node is famous for running your JavaScript on a single thread. This means that no two pieces of your code execute at the same time. If your server has multiple cores or processors, a single Node process will use only one of them.

This is not very efficient. If you can use all of the cores you will be able to handle higher load with the same server and as a result save some money.

One solution is to write some additional code. Node ships with a cluster module which can handle the situation described above.
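For reference, that additional code could look roughly like the sketch below. It assumes a plain HTTP server rather than our Express app, and startCluster is a hypothetical name; only cluster.fork(), cluster.isPrimary / cluster.isMaster and os.cpus() come from Node itself.

```javascript
const cluster = require('cluster')
const http = require('http')
const os = require('os')

// Hypothetical helper: fork one worker per CPU, all sharing the same port.
function startCluster(port) {
  // cluster.isPrimary exists on newer Node releases; older ones only have isMaster.
  const isPrimary = cluster.isPrimary !== undefined ? cluster.isPrimary : cluster.isMaster
  if (isPrimary) {
    // The primary process only spawns workers, it serves no requests itself.
    os.cpus().forEach(function () { cluster.fork() })
    return 'primary'
  }
  // Each worker runs its own server; Node distributes connections among them.
  http.createServer(function (req, res) {
    res.end('Hello from worker ' + process.pid)
  }).listen(port)
  return 'worker'
}
```

Calling startCluster(3000) at the bottom of the file would start one worker per core. Notice how much of this has nothing to do with what your application actually does.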

However, I believe it is better to keep in Node only your application logic, and handling multiple processes is not part of that.

Instead, all you have to do on your server is run node app.js multiple times, each time providing a different predefined port for the app to listen on. Then Nginx can forward requests to each of these ports.
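As a rough sketch of how the list of ports could be derived, the snippet below assigns one consecutive port per logical CPU. The instancePorts helper and the base port 5000 are assumptions made for illustration; later in this article we simply hard-code two ports.

```javascript
const os = require('os')

// Hypothetical helper: consecutive ports starting at basePort, one per instance.
function instancePorts(basePort, count) {
  const ports = []
  for (let i = 0; i < count; i++) {
    ports.push(basePort + i)
  }
  return ports
}

// One instance per logical CPU reported by the operating system.
console.log(instancePorts(5000, os.cpus().length))
```

On a machine with two cores this would give you ports 5000 and 5001, which is exactly the layout used in the rest of the article.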

Always On

The next problem that you will face is to keep your app always on. It may crash or your server may restart or something else could happen. You need to ensure that no matter what, your app is always running.

Moreover, this should happen automatically. You don’t want to wake up in the middle of the night just because your server restarted due to a temporary power outage (this happens even on Amazon or Rackspace servers).

Fortunately, all Linux instances come with what is called an init system. These are robust systems which can monitor the status of your application, restart it when certain conditions are met and start it when the server itself is started.

In the past, using the init system required writing complicated scripts and everybody hated it.

These days, most modern Linux distributions come with a modern init system called “systemd” which is very easy to use.

Deploying

Now that you know how you are going to run your code, the next question is how you are going to put your code on your server.

We are going to use Git. It has many great features but we are going to use just a few of them, mainly its ability to push code changes between computers.

Now that you know how it should work in theory, let’s look at how it is done in practice.

Install

First, you will need to install all necessary packages on your server.

Enable the Extra Packages for Enterprise Linux (EPEL) repository on CentOS where some of the packages that we require live.

$ sudo yum install epel-release

Nginx

To install Nginx, run the following in your terminal

$ sudo yum install -y nginx

To make Nginx start when your server starts

$ sudo systemctl enable nginx

To run Nginx right now

$ sudo systemctl start nginx

After this last command you can open your browser, point it at your machine, and the default Nginx home page will welcome you.

Node & NPM

Installing Node requires the following

$ sudo curl -sL https://rpm.nodesource.com/setup | sudo bash -

$ sudo yum install -y nodejs

$ sudo yum install -y gcc-c++ make

That last line is required for some npm packages which build native modules.

Git

$ sudo yum install -y git

After this line you will have Git installed on your CentOS server.

Now we have all the necessary tools installed. The next step is to put a simple Node app on the server and then configure it.

Putting some code on your server

For the purpose of this article we are going to use our base-express repository. It’s a repository that anyone can use to start their Node web project with the Express web framework.

$ cd /opt/

$ sudo mkdir app

$ sudo chown your_app_user_name app

$ git clone https://github.com/terlici/base-express.git app

$ cd app

$ npm install

I prefer using /opt to contain my application files, but you can choose any other folder.

Now our app is ready to run and all of its modules are installed.

Little customization

Let’s customize our application a little. You will see later why.

First replace the app.js file in the root of the folder with the following

    var express = require('express')
      , app = express()
      , port = process.env.PORT || 3000  // port comes from the environment

    // Render the Jade templates found in views/
    app.set('views', __dirname + '/views')
    app.engine('jade', require('jade').__express)
    app.set('view engine', 'jade')

    // All routes live in controllers/; no static file middleware here
    app.use(require('./controllers'))

    app.listen(port, function() {
      console.log('Listening on port ' + port)
    })

After these changes, our Node application can no longer serve the static files in its public/ folder by itself.

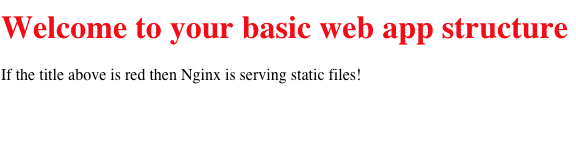

Next, please replace the views/index.jade with the following

    doctype html
    html
      head
        title Your basic web app structure
        link(href="/public/css/style.css", rel="stylesheet")
      body
        h1 Welcome to your basic web app structure
        p
          | If the title above is red
          | then Nginx is serving static files!

The last file to change is public/css/style.css

    h1 {
      color: red;
    }

Our simple Node & Express app is ready to entertain the world.

Running 24x7

Now that we have our application ready, how do we start it and keep it always running?

CentOS 7 comes by default with systemd as its init system. Almost all modern Linux flavors come with systemd, so you will be able to use this nearly everywhere.

Here is our simple systemd service:

    [Service]
    ExecStart=/usr/bin/node /opt/app/app.js
    Restart=always
    StandardOutput=syslog
    StandardError=syslog
    SyslogIdentifier=node-app-1
    User=your_app_user_name
    Group=your_app_user_name
    Environment=NODE_ENV=production PORT=5000

    [Install]
    WantedBy=multi-user.target

As you can see, this small file tells systemd to restart the service when it dies, to send all output to syslog, and to pass 5000 as the port, among a few other things.

Put this in /etc/systemd/system/node-app-1.service but don’t forget to replace your_app_user_name with the appropriate user name.

Then create a second file like the one above in /etc/systemd/system/node-app-2.service with two minor differences. Instead of SyslogIdentifier=node-app-1 use SyslogIdentifier=node-app-2, and change PORT=5000 to PORT=5001.
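If you end up with more than two instances, copying the file by hand gets tedious. Here is a minimal sketch of generating the same unit file text for any instance. The renderUnit helper is hypothetical and only reproduces the file shown above with the identifier and port filled in; you would still have to write the result to /etc/systemd/system/ yourself.

```javascript
// Hypothetical generator for the unit file shown above.
// index becomes the node-app-N suffix, port the PORT value.
function renderUnit(index, port, user) {
  return [
    '[Service]',
    'ExecStart=/usr/bin/node /opt/app/app.js',
    'Restart=always',
    'StandardOutput=syslog',
    'StandardError=syslog',
    'SyslogIdentifier=node-app-' + index,
    'User=' + user,
    'Group=' + user,
    'Environment=NODE_ENV=production PORT=' + port,
    '',
    '[Install]',
    'WantedBy=multi-user.target',
    ''
  ].join('\n')
}

// Text for the second instance described above.
console.log(renderUnit(2, 5001, 'your_app_user_name'))
```

Only two lines differ between instances, which is why the second file is described as a copy with two minor changes.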

Then run the following to start both instances of our node application

$ sudo systemctl start node-app-1

$ sudo systemctl start node-app-2

The first instance will accept requests on port 5000, whereas the other one will use port 5001. If either of them crashes, it will automatically be restarted.

To make your Node app instances run when the server starts, do the following

$ sudo systemctl enable node-app-1

$ sudo systemctl enable node-app-2

If there are problems with any of the commands above, you can use either of these two:

$ sudo systemctl status node-app-1

$ sudo journalctl -u node-app-1

The first command will show the current status of your app instance and whether it is running. The second command will show you all logging information from your instance, including output on the standard error and standard output streams.

Use the first command right now to see whether your app is running or whether there has been some problem starting it.

Re-deploying your app

With the current setup, when there is new application code in your repository, all you have to do is the following

$ cd /opt/app

$ git pull

$ sudo systemctl restart node-app-1

$ sudo systemctl restart node-app-2

And the latest version will be ready to serve your users.
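If you would rather run these steps from a single script, the sketch below shows one possible way to do it with Node itself. Both redeploySteps and redeploy are hypothetical helpers; the only real API used is execFileSync from Node's child_process module, which throws when a command fails, so the script stops at the first error.

```javascript
const { execFileSync } = require('child_process')

// Hypothetical helper: the redeploy steps as [command, args] pairs.
function redeploySteps(instanceCount) {
  const steps = [['git', ['pull']]]
  for (let i = 1; i <= instanceCount; i++) {
    steps.push(['sudo', ['systemctl', 'restart', 'node-app-' + i]])
  }
  return steps
}

// Runs every step inside appDir. The runner is injectable so the steps
// can be inspected without actually touching the server.
function redeploy(appDir, instanceCount, run) {
  const runner = run || execFileSync
  redeploySteps(instanceCount).forEach(function (step) {
    runner(step[0], step[1], { cwd: appDir, stdio: 'inherit' })
  })
}
```

On the server you would call redeploy('/opt/app', 2) to pull the code and restart both instances.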

Configure Nginx

Listening on ports 5000 & 5001 is nice, but by default browsers connect to port 80. Also, in our current setup no static files are served by our application.

Here is our nginx configuration

    # The two Node instances started by systemd
    upstream node_server {
        server 127.0.0.1:5000 fail_timeout=0;
        server 127.0.0.1:5001 fail_timeout=0;
    }

    server {
        listen 80 default_server;
        listen [::]:80 default_server;
        index index.html index.htm;
        server_name _;

        # Everything else is forwarded to the Node instances
        location / {
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_redirect off;
            proxy_buffering off;
            proxy_pass http://node_server;
        }

        # Static files are served directly from /opt/app/public/
        location /public/ {
            root /opt/app;
        }
    }

This configuration will make all static files from /opt/app/public/ available at the /public/ path. It will forward all other requests to the two instances of our app listening on ports 5000 and 5001. In effect, Nginx is both a web server and a load balancer.
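It may not be obvious why root is /opt/app and not /opt/app/public. With the root directive, Nginx appends the full request URI to the root directory to find the file. The sketch below mimics that mapping; resolveWithRoot is a hypothetical helper and a simplification of what Nginx actually does.

```javascript
const path = require('path')

// Hypothetical helper mirroring Nginx's `root` directive:
// the whole request URI is appended to the root directory.
function resolveWithRoot(root, uri) {
  return path.posix.join(root, uri)
}

console.log(resolveWithRoot('/opt/app', '/public/css/style.css'))
// prints: /opt/app/public/css/style.css
```

Because the /public/ prefix is kept in the final path, the root has to be the folder that contains public/, not public/ itself.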

To use this configuration, save it in /etc/nginx/conf.d/node-app.conf. Then, in your /etc/nginx/nginx.conf file, completely remove the default server section below the include /etc/nginx/conf.d/*.conf; line.

All you have to do now is to restart nginx for your latest configuration to take effect.

$ sudo systemctl restart nginx

If all works as expected, at the web address of your server you should see the welcome page of the app, with its title in red.

Where to go from here?

This is just the tip of the iceberg when hosting and deploying node applications.

One thing that can be improved is to create a new user specifically for the node app. This will make the application more secure, as it will have very minimal access besides what it needs.

Something else which you could do, and which I may do in a future article, is take everything above and create an Ansible playbook out of it.

Ansible is a great tool to configure and orchestrate servers. It’s really simple. Using this playbook you will be able to launch & deploy even hundreds of servers.

Other articles that you may like